The Coming Divide in Defense: Federation or Fallout Under DoDI 5000.97

The defense industry is entering a pivotal phase of transformation. With the release of DoDI 5000.97, the U.S. Department of Defense (DoD) has made

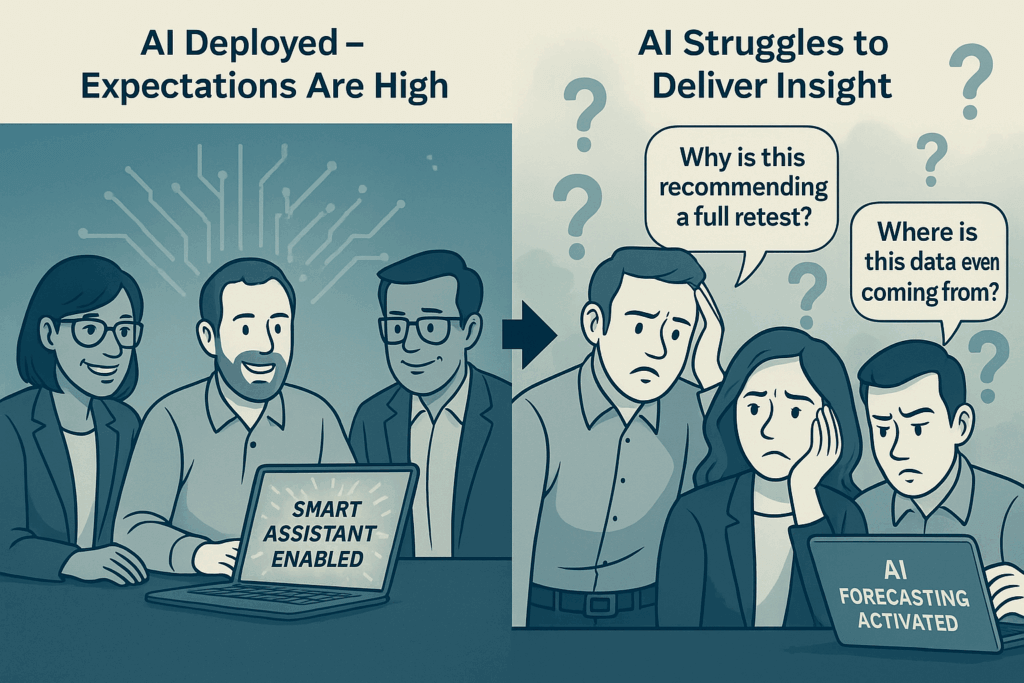

What started as a strategic bet becomes a stalled initiative. The board wants answers.

The team says:

“It’s the data. We’re working on it.”

But under the hood, the real issue is this:

The model is built, but it lacks access to the data foundation it depends on.

Executives everywhere are excited about AI – copilots, predictive analytics, autonomous workflows. But after the demos, the pilots, and the first wave of investment, something frustrating happens:

The results fall short. The impact feels…underwhelming.

So what’s holding it back?

Data Isn’t Decision-Grade

The challenge isn’t having too little data. It’s that so much of it ends up noisy, duplicated, outdated, or unlabeled. And when AI learns from the wrong signals, it naturally delivers the wrong answers.

A recent survey by NewVantage found that 87% of organizations say data quality limits their AI success.
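To make "decision-grade" concrete, here is a minimal Python sketch of a pre-training quality check that flags the duplicated, outdated, and unlabeled records described above. The `Record` shape, the `quality_report` helper, and the 90-day freshness window are illustrative assumptions, not part of any specific product or the survey cited here.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Record:
    """Hypothetical record shape, used only for illustration."""
    record_id: str
    text: str
    label: Optional[str]
    updated_at: datetime


def quality_report(records, now, max_age_days=90):
    """Flag records that are duplicated, outdated, or unlabeled."""
    seen = set()
    report = {"duplicate": [], "outdated": [], "unlabeled": []}
    cutoff = now - timedelta(days=max_age_days)  # assumed freshness window
    for r in records:
        # Treat text that differs only in case or spacing as a duplicate.
        key = " ".join(r.text.lower().split())
        if key in seen:
            report["duplicate"].append(r.record_id)
        else:
            seen.add(key)
        if r.updated_at < cutoff:
            report["outdated"].append(r.record_id)
        if not r.label:
            report["unlabeled"].append(r.record_id)
    return report
```

A check like this is deliberately simple. The point is that problems get surfaced before the model learns from them, rather than being discovered later through bad outputs.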

Experimenting in Silos

Marketing is piloting generative AI. Engineering is trying code copilots. Support is running smart chatbots. But they all operate in isolation – without shared learning, governance, or strategy. There’s no compounding effect.

Models Don’t Understand the Business Context

AI can summarize. It can predict. But can it understand why that release is risky? Or why that spike in support tickets is tied to a specific product rollout? Without context, AI makes surface-level guesses – not strategic calls.

Governance Is Still an Afterthought

AI won’t work without knowing who owns the data, how it’s maintained, or what compliance standards it must meet. Without clear data governance, most AI projects hit a wall – or worse, become a liability.

There’s No Shared Definition of Success

Is the AI helping teams move faster? Is it improving accuracy? Reducing costs? Without shared KPIs across business, IT, and product teams, most pilots stay stuck in “interesting experiment” territory.

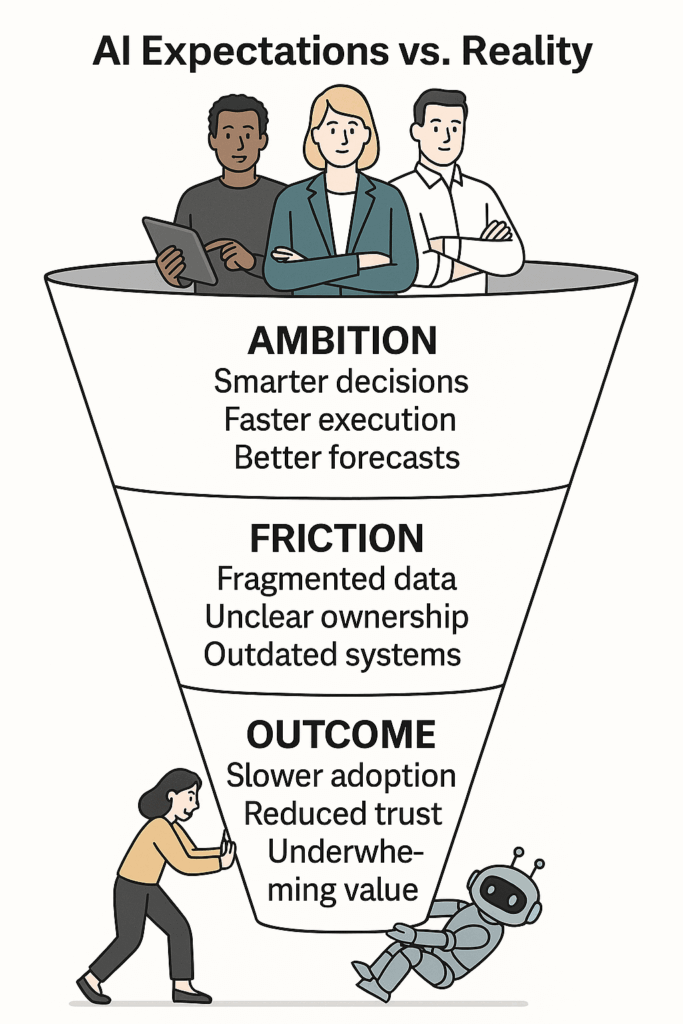

Even with the right intent, talent, and investment, AI still underwhelms. Not because teams are wrong – but because the environment hasn’t caught up.

AI Is Moving Faster Than the Systems Underneath

AI is working at the strategic level – copilots, automation, predictive analytics. But the underlying systems still operate in silos or on outdated architecture. The ambition is high, but the foundation wasn’t built to keep up with this pace.

Every Team Owns Data But No One Owns the Flow

Sales, product, ops – everyone manages their own data well. But no one owns the full journey from raw data to AI-ready insight. When bottlenecks show up, there’s no single owner, just slowdowns that no one feels accountable for.

Yesterday’s Tools Are Quietly Setting Today’s Limits

The systems built years ago were the right call at the time. But now they’re creating friction. Workarounds pile up. Custom logic adds complexity. And before long, what was once a strength becomes a drag on progress.

AI Doesn’t Fail Loudly. It Just Stops Adding Value

When copilots make off-target suggestions or forecasts seem a bit off, teams lose confidence. They start validating outputs manually, creating side processes, or ignoring results altogether. It’s not a crash – it’s a quiet unraveling.

Fixing the Foundation Feels Risky So It Gets Deferred

Everyone sees the problem. But fixing it sounds like months of disruption, cost, and uncertainty. So, change gets pushed. And AI quietly stays underleveraged – not because of strategy, but because of inertia.

It’s not just the model. It’s the foundation they build around it.

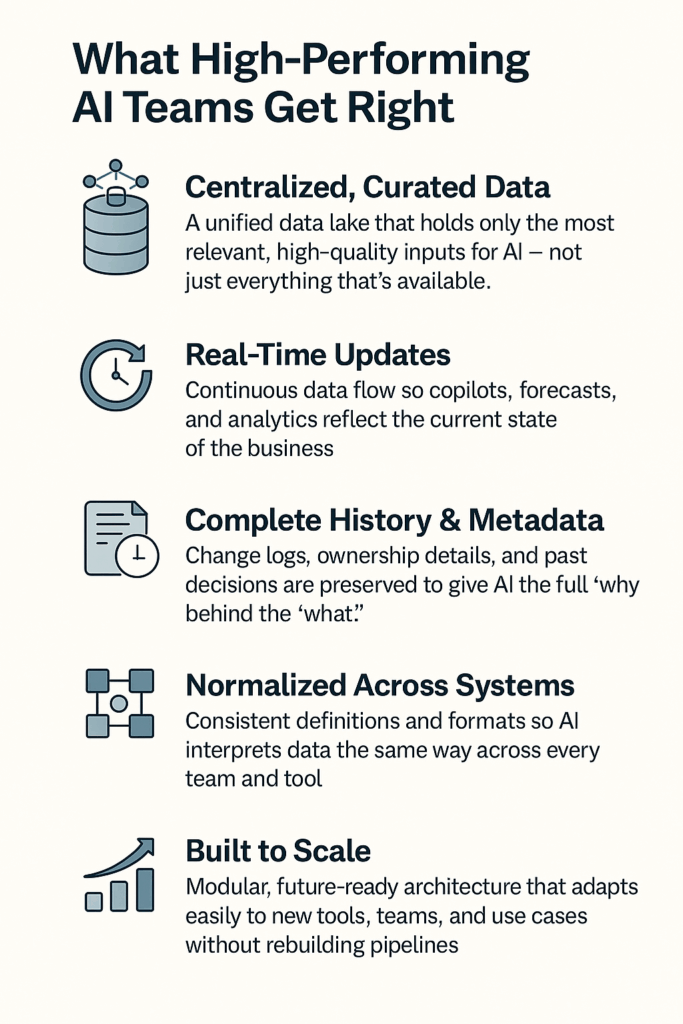

The best-performing enterprises don’t leave data to chance. They invest in the infrastructure that turns AI into impact.

Here’s what they consistently get right:

They centralize the right data (not just any data)

Top teams invest in a unified data lake that brings together the most critical inputs across functions. It’s not a dumping ground – it’s curated, purpose-built, and designed for AI to consume.

Their data is always up to date

No AI can make timely decisions on yesterday’s data. Leaders prioritize real-time sync so copilots, forecasts, and analytics operate with a live view of the business.

They preserve full history and metadata

Context matters, and that means keeping more than just the latest version. These organizations retain change logs, ownership, and past decisions so AI doesn’t lose sight of the “why” behind the “what.”
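One way to picture this is an append-only change log kept alongside each record. The sketch below is a simplified illustration in Python. The `Change` and `TrackedItem` names and their fields are hypothetical and do not describe how any particular tool stores history.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Any


@dataclass(frozen=True)
class Change:
    """One immutable entry in an item's history (illustrative shape)."""
    field_name: str
    old_value: Any
    new_value: Any
    changed_by: str
    changed_at: datetime
    reason: str


@dataclass
class TrackedItem:
    item_id: str
    fields: dict = field(default_factory=dict)
    history: list = field(default_factory=list)

    def update(self, field_name, new_value, changed_by, changed_at, reason):
        """Record the change before applying it, so no version is lost."""
        old_value = self.fields.get(field_name)
        self.history.append(
            Change(field_name, old_value, new_value, changed_by, changed_at, reason)
        )
        self.fields[field_name] = new_value

    def why(self, field_name):
        """Return the reason for the latest change to a field, or None."""
        for change in reversed(self.history):
            if change.field_name == field_name:
                return change.reason
        return None
```

Because the log is never overwritten, a downstream model or analyst can ask not only what the current priority is, but who changed it and why.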

They normalize data across systems

When different systems speak different languages, AI guesses. Leaders build normalization pipelines so records mean the same thing everywhere, reducing ambiguity and increasing reliability.
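As a rough illustration of a normalization step, the Python sketch below maps status values from two source systems onto one shared vocabulary. The system names (`tracker_a`, `tracker_b`), the status values, and the `normalize` helper are all made up for the example.

```python
# Hypothetical mapping from (source system, local status) to a shared vocabulary.
STATUS_MAP = {
    ("tracker_a", "to do"): "open",
    ("tracker_a", "done"): "closed",
    ("tracker_b", "new"): "open",
    ("tracker_b", "resolved"): "closed",
}


def normalize(record: dict) -> dict:
    """Translate a source record into the canonical shape, or fail loudly."""
    key = (record["source"], record["status"].strip().lower())
    if key not in STATUS_MAP:
        # Refuse to guess: an unmapped value is a pipeline gap, not a default.
        raise ValueError(f"unmapped status: {key}")
    return {
        "id": f'{record["source"]}:{record["id"]}',
        "status": STATUS_MAP[key],
        "source": record["source"],
    }
```

Raising on unmapped values is a design choice in this sketch. It keeps ambiguity out of the data AI consumes instead of silently passing it through.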

They plan for scale from day one

New tools. New teams. New use cases. High performers don’t rebuild pipelines every time something changes; they architect with modularity and future growth in mind.

If AI isn’t delivering, the answer isn’t always more modeling, more tuning, or more tools. The real unlock comes from creating the conditions where AI can thrive. That means shifting focus from the outputs to the inputs: the data itself.

For AI to perform at an expected level, teams need:

By now, most leaders have realized: AI performance isn’t just a matter of the model. It’s a function of everything around it – how data flows, how context is preserved, and how change is absorbed across systems.

That’s where OpsHub comes in.

Not as a dashboard. Not as another layer in the stack. But as the operational fabric that ensures AI has what it needs to succeed from day one, and every day after.

Here’s what that looks like in practice:

The Data, Whole and Alive

OpsHub doesn’t just move data; it preserves relationships, hierarchies, and change history across every source system. That means copilots, forecasters, and AI models get access to the full picture in real time, not fragments stitched together after the fact.

No More Guessing the “Why”

Context is what turns data into meaning. OpsHub ensures every requirement, test case, incident, or change is tied to its origin. So when AI flags a risk or suggests a next step, the team actually knows why and can trust the insight.

Make AI Work with What the Business Already Has

Enterprises don’t need to overhaul existing systems. OpsHub acts as a connective layer between current tools and the AI capabilities being introduced. It operates behind the scenes to establish consistency and context without disrupting ongoing operations.

Adapt to Change Without Breaking the System

Business needs change. New tools come in. Teams restructure. OpsHub absorbs that change while ensuring that data flows without breaking the pipelines AI depends on.

Built for Governance, Not Just Speed

Speed matters. But so does safety. OpsHub ensures every action is auditable, access-controlled, and compliant. That’s especially critical when AI is making decisions on sensitive workflows.

For many enterprises, AI adoption hasn’t matched expectations. Instead of accelerating outcomes, it often introduces new complexity.

But the root cause is clear – and solvable.

The missing piece is a data foundation that AI can actually work with: clean, consistent, and continuously current.

OpsHub helps organizations put that foundation in place, across their existing environment.

Check your enterprise AI readiness in minutes with this checklist

Suhana works as an Associate Marketing Executive at OpsHub. She enjoys creating innovative content and social media strategies for tech-driven businesses, blending creativity with communication to fuel growth and build impactful connections.